
3/18/2023 Apache Airflow tutorial

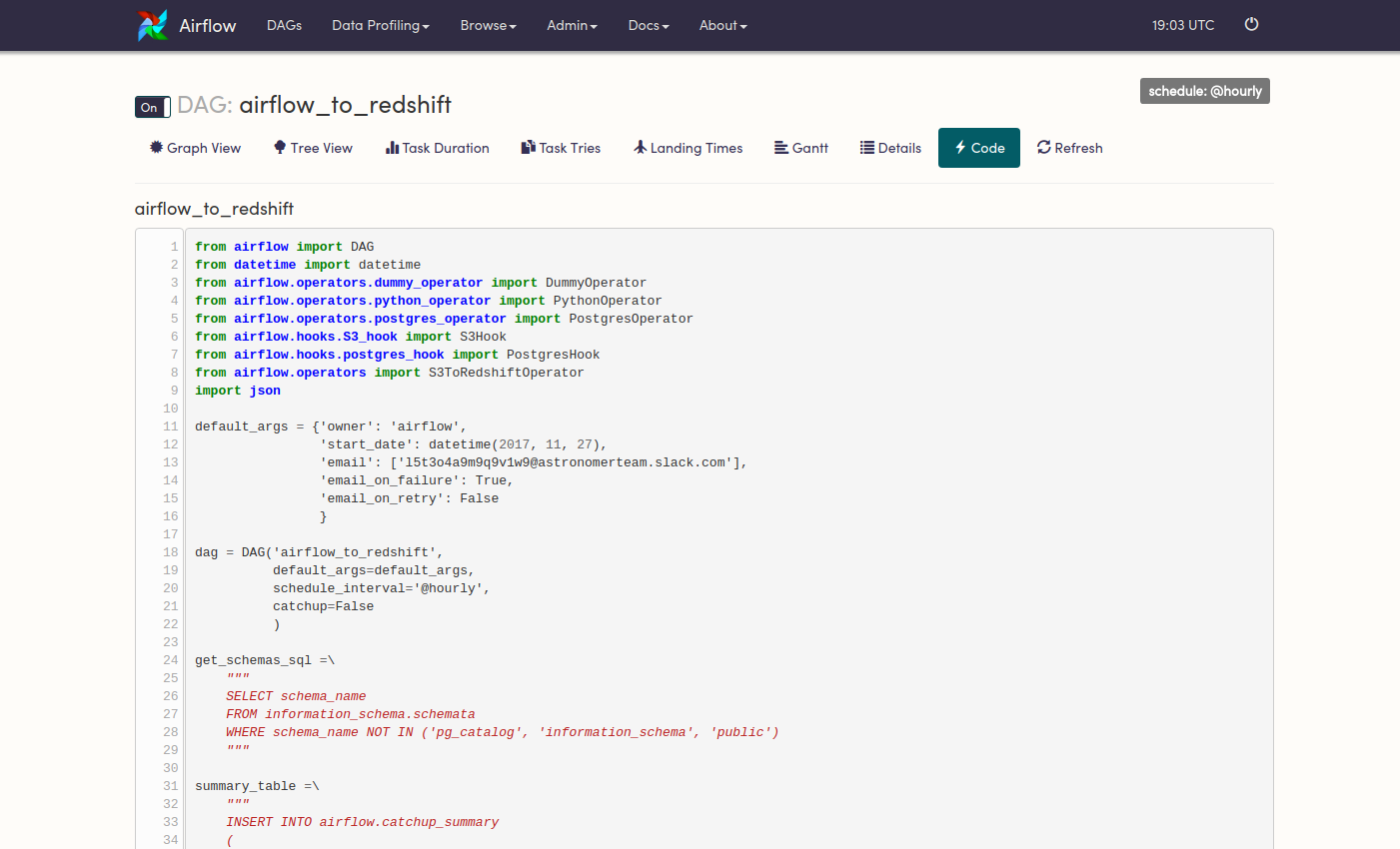

Briefly, Apache Airflow is a workflow management system (WMS). It groups tasks into analyses and defines a logical template for when these analyses should be run. It then gives you all kinds of logging and reporting, and a nice graphical view of your analyses.

This tutorial is broken down into the following steps:

1. Sign up for Google Cloud Platform and create a compute instance
2. Pull the tutorial contents from GitHub
3. Overview of the ML pipeline in Airflow
4. Install Docker and set up virtual hosts using nginx
5. Build and run a Docker container
6. Open the Airflow UI and run the ML pipeline
7. Run the deployed web app

Let's run a few commands to validate this script further:

- airflow db init: initialize the database tables
- airflow dags list: print the list of active DAGs
- airflow tasks list tutorial: print the list of tasks in the 'tutorial' DAG
- airflow tasks list tutorial --tree: print the hierarchy of tasks in the 'tutorial' DAG

The date range in this context is a start_date and optionally an end_date, which are used to populate the run schedule with task instances from this DAG.

With depends_on_past=True, individual task instances depend on the success of their previous task instance (that is, previous according to execution_date). Task instances whose execution_date equals start_date disregard this dependency because there is no previous task instance for them to depend on.

You may also want to consider wait_for_downstream=True when using depends_on_past=True. While depends_on_past=True causes a task instance to depend on the success of its previous task instance, wait_for_downstream=True causes a task instance to also wait for all task instances immediately downstream of the previous task instance.
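The two flags can be sketched in plain Python. This is a simplified model of the rules as described here, not Airflow's scheduler code: run_dates, can_run, and the task names extract and load are hypothetical, and daily runs are assumed.

```python
from datetime import date, timedelta


def run_dates(start_date, end_date):
    """Daily execution dates from start_date through end_date, inclusive."""
    days = (end_date - start_date).days
    return [start_date + timedelta(days=n) for n in range(days + 1)]


def can_run(task, run_date, start_date, succeeded, downstream,
            depends_on_past=False, wait_for_downstream=False):
    """Return True if `task` may run for `run_date` under the two flags.

    succeeded:  set of (task, date) pairs that finished successfully.
    downstream: dict mapping a task to its immediate downstream tasks.
    """
    if not depends_on_past or run_date == start_date:
        # The first run has no previous instance, so the dependency is skipped.
        return True
    previous = run_date - timedelta(days=1)
    if (task, previous) not in succeeded:
        return False
    if wait_for_downstream:
        # Also require the previous run's immediate downstream tasks to succeed.
        return all((child, previous) in succeeded
                   for child in downstream.get(task, []))
    return True


# Hypothetical two-task pipeline: extract -> load
start = date(2023, 3, 1)
dates = run_dates(start, date(2023, 3, 3))
downstream = {"extract": ["load"]}
succeeded = {("extract", date(2023, 3, 1))}  # load did not succeed on 1 March

first = can_run("extract", dates[0], start, succeeded, downstream,
                depends_on_past=True)
second = can_run("extract", dates[1], start, succeeded, downstream,
                 depends_on_past=True)
strict = can_run("extract", dates[1], start, succeeded, downstream,
                 depends_on_past=True, wait_for_downstream=True)
print(first, second, strict)  # True True False
```

In this model the 2 March run of extract is allowed under depends_on_past alone, but blocked once wait_for_downstream is added, because load never succeeded for 1 March.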

If you are wondering how to start working with Apache Airflow for small developments or academic purposes, here you will learn how. Deploying Airflow on GCP Compute Engine (a self-managed deployment) could cost less than you think, with all the advantages of using GCP services like BigQuery or Dataflow. This scenario assumes you need a stable Airflow instance for a proof of concept or as a learning space.

Why not Cloud Composer? Cloud Composer is a fully managed workflow orchestration service built on Apache Airflow. I suggest going with it if you or your team require a full production or development environment. Composer demands a minimum of 3 nodes (Compute Engine instances) and other GCP services, so the billing could be an obstacle if you are just starting your learning path on Airflow.

Important: Google Cloud offers $300 in credits for first-time users.

First, let's start with some concepts and then go deep with a simple Airflow deployment on a Compute Engine instance.

A DAG is a collection of all the tasks, organized in a way that reflects their relationships and dependencies.

A Task is a unit of work within a DAG. Some examples are a PythonOperator, which executes a piece of Python code, or a BashOperator, which executes a Bash command.

Airflow 2 was launched in December 2020 with a bunch of new functionality. Here are some important changes:

- Full REST API: for example, to externally trigger a DAG run. The API also implements CRUD operations.
- New UI: a refreshed UI with a simple and modern look, including an auto-refresh feature.
- Better Scheduler: a faster scheduler, with support for running multiple schedulers.
- Smart Sensors: they can reduce the number of occupied workers by over 50%.

For more details and changes regarding authoring DAGs in Airflow 2.0, check out Tomasz Urbaszek's article for the official Airflow publication, Astronomer's post, or Anna Anisienia's article on Towards Data Science.

To set up, create the virtual environment from the environment file and activate it. Both Python 2 and 3 are supported.

The DAG definition file starts with its imports: timedelta from datetime, dedent from textwrap, the DAG object from airflow (needed to instantiate a DAG), BashOperator (operators are what actually do the work), and days_ago. It then defines default_args, the arguments that get passed on to each operator; you can override them on a per-task basis during operator initialization. It ends by setting the tasks that run downstream of t1.

Everything looks like it's running fine, so let's run a backfill. Backfill will respect your dependencies, including depends_on_past=True if you use it, and emit logs into files. If you are interested in tracking the progress visually as your backfill progresses, airflow webserver will start a web server. If you do have a webserver up, you will be able to track the progress there.
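To make the DAG and Task definitions concrete, here is a minimal sketch in plain Python, without Airflow itself. It uses the standard library's graphlib (Python 3.9+) to order tasks by their dependencies. The task names and the helpers execution_order and is_acyclic are hypothetical, and Airflow's own scheduling is more involved than this.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical tasks: each key maps to the set of tasks it depends on.
dependencies = {
    "print_date": set(),
    "sleep": {"print_date"},
    "templated": {"print_date"},
}


def execution_order(deps):
    """Return one valid order in which the tasks could run."""
    return list(TopologicalSorter(deps).static_order())


def is_acyclic(deps):
    """A DAG must not contain cycles; report whether `deps` qualifies."""
    try:
        TopologicalSorter(deps).prepare()
    except CycleError:
        return False
    return True


order = execution_order(dependencies)
print(order)                                   # print_date always comes first
print(is_acyclic(dependencies))                # True
print(is_acyclic({"a": {"b"}, "b": {"a"}}))    # False: a and b wait on each other
```

The point of the sketch is that a task only becomes runnable once everything it depends on has finished, and that a dependency loop makes the graph invalid as a DAG.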
